Sony RX10 III: About 10 to 12 bit Gradation, Perhaps 11-Bit Range: 10-Bit in General

See my review of the Sony RX10 III.

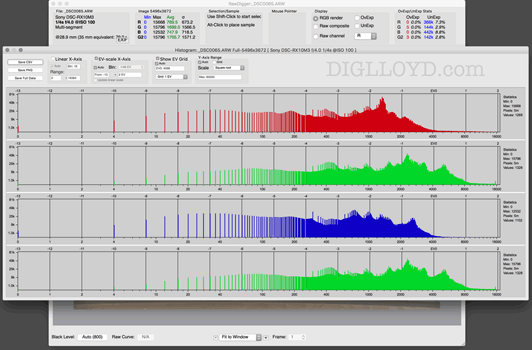

This post has been rewritten, as my initial analysis was incorrect; the overall histogram fooled me. I've prepared an in-depth analysis using a variety of RawDigger histograms in:

Sony RX10 III Gradation and Bit Depth

Well, the Sony RX10 III files look great under most conditions, and that is what counts. How gradation and bit depth work out in 'special circumstances' is less clear. Given the small sensor, I’m still impressed with the look of the images. And it might be that the very small photosites really cannot offer any real 14-bit mode in any case—the RX10 III does not offer uncompressed raw.

Note also that using Long Exposure Noise Reduction (when it occurs) may cut the range even further (not verified, but the Sony A7R II does that).

Alex Tutubalin of RawDigger writes:

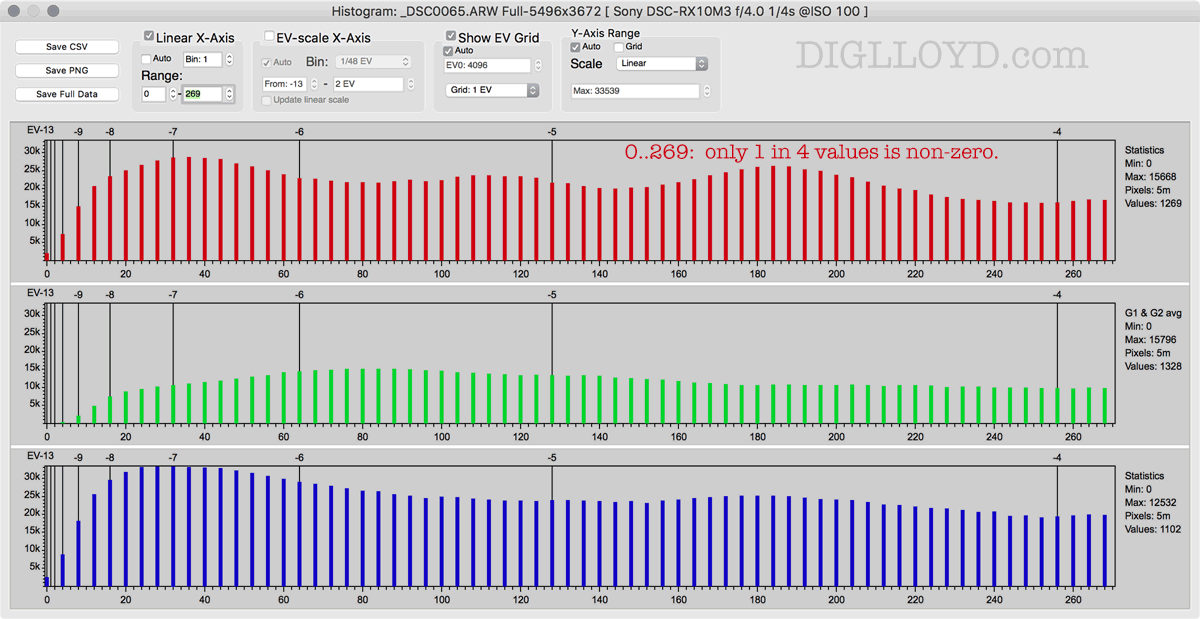

RawDigger displays 'Values' data on the histogram area. This is the count of distinct non-zero bins in the histogram. For true 14-bit images it should be ~2^14 (or slightly less due to a non-zero black level).

On the Sony RX10 III it is only ~1300 or so, so only ~11 bits are used.

... So, this camera is effectively 12-bit (in shadows) and lossy compressed in highlights (so the full level count is not ~4096 minus black level, but only ~1300 or so, slightly above 10 bits in 'pure bit count' terms).

Our article 'RawDigger: detecting posterization in SONY cRAW/ARW2 files' describes the Sony '11+7' format in depth, including the contrast tone used (starting from the 'Inside Sony cRAW' chapter). The difference from the A7 family is the 'native' 12-bit ADC, so only 1 of 4 levels is used in shadows (only 1 of 2 for A7/compressed, because of the nature of the format).
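
To make the arithmetic above concrete, here is a rough Python sketch of the kind of counting involved. It is not RawDigger's code. The function names (`distinct_levels`, `effective_bits`, `shadow_step`) are hypothetical, and the sample data is synthetic, not taken from an RX10 III file. The only figure borrowed from the text is the ~1300 level count.

```python
import math

def distinct_levels(samples):
    """Count distinct raw values, analogous to counting non-empty histogram bins."""
    return len(set(samples))

def effective_bits(levels):
    """Express a level count as a 'pure bit count' (log base 2)."""
    return math.log2(levels)

def shadow_step(samples, shadow_max):
    """Smallest gap between adjacent distinct levels at or below shadow_max."""
    levels = sorted({s for s in samples if s <= shadow_max})
    return min(b - a for a, b in zip(levels, levels[1:]))

# Synthetic data (assumption): every code in a 14-bit container is used.
true_14bit = list(range(0, 2 ** 14))

# Synthetic data (assumption): a 12-bit ADC written into a 14-bit container,
# so only every 4th code can occur.
adc_12bit = list(range(0, 2 ** 14, 4))

print(distinct_levels(true_14bit))                  # 16384 levels, i.e. 2^14
print(effective_bits(distinct_levels(adc_12bit)))   # 12.0
print(shadow_step(adc_12bit, 256))                  # 4, i.e. 1 of 4 levels used
print(f"{effective_bits(1300):.2f}")                # 10.34, slightly above 10 bits
```

With real data, the samples would come from a decoded raw channel. A black level offset would reduce the counts slightly, as noted in the quote.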