Reader Question: Diffraction

Henry D writes:

Is it true that diffraction problems are primarily due to the sensor and not the lens?

DIGLLOYD: Not true; it is just the physics of light. Diffraction for any given lens and aperture is invariant. The sensor (or film) sees whatever light falls upon it.

But it is true that a sensor with more megapixels will show the effects of diffraction more readily on a per-pixel basis; it is recording more detail, after all. In no way does this mean that the 50MP camera is worse than a 22MP camera. The 50MP camera records more detail even at f/16, avoids staircasing and aliasing better, etc. At the same aperture, the 50MP camera records the same image projected by the same lens. What diffraction degrades is per-pixel acuity. But f/8 at 50MP delivers a *lot* more sharpness than f/8 at 22MP.
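To put rough numbers on the per-pixel point, here is a minimal Python sketch that estimates photosite pitch from megapixel count. It assumes an idealized 36 × 24 mm sensor with square photosites and no gaps, so the results are approximations; `pixel_pitch_um` and `linear_resolution_gain` are hypothetical helper names.

```python
import math

# Assumption: an idealized 36 x 24 mm full-frame sensor with square photosites and no gaps.
SENSOR_AREA_UM2 = 36_000 * 24_000  # sensor area in square micrometers


def pixel_pitch_um(megapixels: float) -> float:
    """Approximate photosite pitch (micrometers) for a full-frame sensor."""
    return math.sqrt(SENSOR_AREA_UM2 / (megapixels * 1e6))


def linear_resolution_gain(mp_high: float, mp_low: float) -> float:
    """Gain in linear resolution (pixels per picture height) between two sensors."""
    return math.sqrt(mp_high / mp_low)


if __name__ == "__main__":
    for mp in (22, 50):
        print(f"{mp} MP: pitch ~ {pixel_pitch_um(mp):.2f} um")
    print(f"pixel count ratio: {50 / 22:.2f}x")
    print(f"linear resolution gain: {linear_resolution_gain(50, 22):.2f}x")
```

Roughly 2.3X the pixels works out to only about 1.5X the linear resolution, and each 50MP photosite is about 4.2 µm wide versus about 6.3 µm at 22MP, so the same diffraction blur covers more of the smaller photosites.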

For a full-frame 50MP camera, diffraction is detectable at f/5.6, but a non-issue. At f/8 it starts to dull the image somewhat, and it dulls the image quite a lot at f/11. (JPEG shooters may disagree, since most in-camera JPEGs already compromise fine detail.)
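One rough way to see the trend is to express the Airy disk diameter (to the first dark ring, 2.44·λ·N) in units of the 50MP photosite pitch. This sketch assumes green light at 550 nm and the same idealized full-frame geometry; it shows the trend only and does not predict where blur becomes visible, which also depends on demosaicing, sharpening, and the lens itself. The helper names are hypothetical.

```python
import math

WAVELENGTH_UM = 0.55  # assumption: green light, ~550 nm
FULL_FRAME_AREA_UM2 = 36_000 * 24_000  # idealized 36 x 24 mm sensor, in square micrometers


def airy_diameter_um(f_number: float, wavelength_um: float = WAVELENGTH_UM) -> float:
    """Diameter of the Airy disk out to its first dark ring: 2.44 * lambda * N."""
    return 2.44 * wavelength_um * f_number


def pitch_um(megapixels: float) -> float:
    """Approximate photosite pitch for an idealized full-frame sensor."""
    return math.sqrt(FULL_FRAME_AREA_UM2 / (megapixels * 1e6))


def airy_in_pixels(f_number: float, megapixels: float) -> float:
    """How many photosite widths the Airy disk spans."""
    return airy_diameter_um(f_number) / pitch_um(megapixels)


if __name__ == "__main__":
    for n in (5.6, 8, 11, 16):
        print(f"f/{n}: Airy disk ~ {airy_diameter_um(n):.1f} um "
              f"= {airy_in_pixels(n, 50):.1f} photosites at 50 MP")
```

Under these assumptions the disk spans roughly 1.8 photosites at f/5.6, 2.6 at f/8, and 3.6 at f/11.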

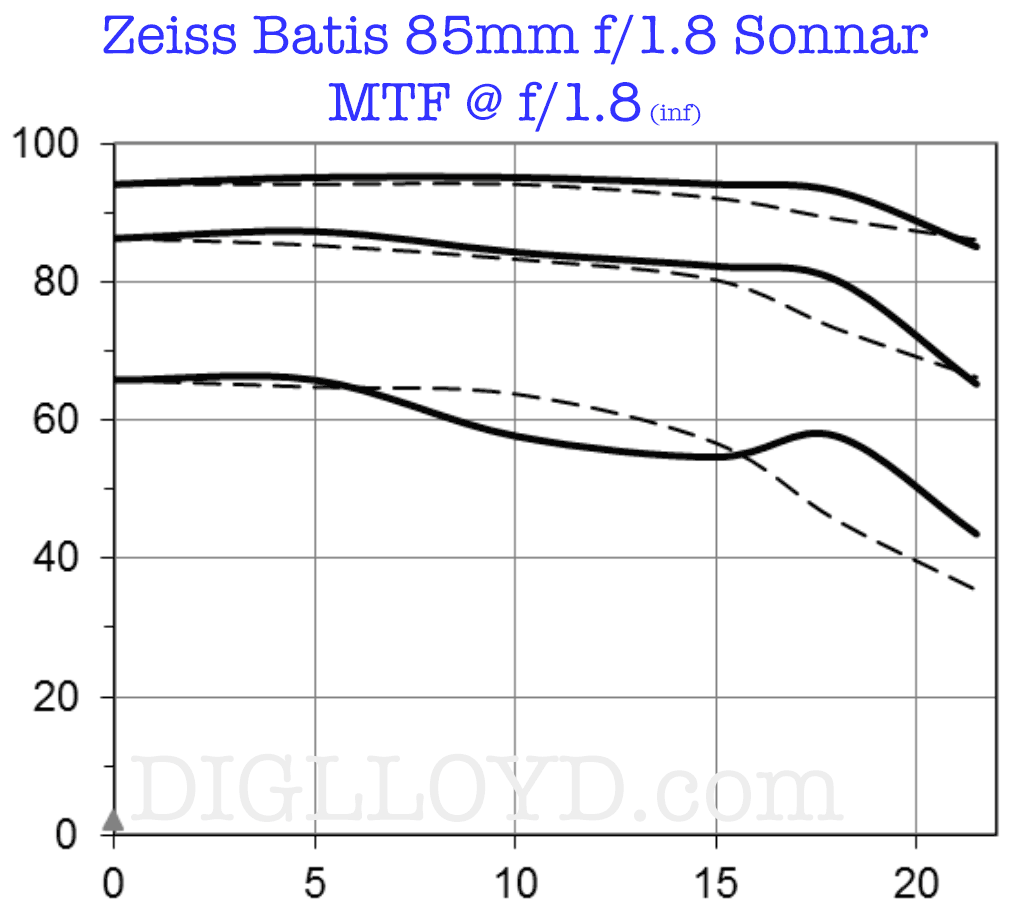

But more than fine detail is lost to diffraction; overall image contrast (brilliance) is dulled considerably. See the full aperture series for the Zeiss Batis 85mm f/1.8 Sonnar, and observe that contrast for image structures of every size is hugely degraded at f/16 (lower at f/16 than at f/1.8).

Graphs courtesy of Carl Zeiss
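The contrast loss can be sketched with the textbook MTF of an ideal, aberration-free lens with a circular aperture, MTF(s) = (2/π)[arccos(s) − s·√(1 − s²)], where s is the spatial frequency divided by the cutoff frequency 1/(λ·N). This is only the diffraction ceiling: it assumes 550 nm light and ignores aberrations, so it says nothing about how the Batis actually performs at f/1.8, where aberrations rather than diffraction limit a real lens. The function name `diffraction_mtf` is hypothetical.

```python
import math

WAVELENGTH_MM = 0.00055  # assumption: 550 nm green light, expressed in millimeters


def diffraction_mtf(freq_lp_mm: float, f_number: float,
                    wavelength_mm: float = WAVELENGTH_MM) -> float:
    """MTF of an ideal, aberration-free lens with a circular aperture (incoherent light)."""
    cutoff = 1.0 / (wavelength_mm * f_number)  # lp/mm at which contrast falls to zero
    s = freq_lp_mm / cutoff  # normalized spatial frequency
    if s >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(s) - s * math.sqrt(1.0 - s * s))


if __name__ == "__main__":
    for n in (1.8, 8, 16):
        row = ", ".join(f"{f} lp/mm: {diffraction_mtf(f, n):.0%}" for f in (10, 20, 40))
        print(f"f/{n}: {row}")
```

At 40 lp/mm the diffraction ceiling drops from about 95% at f/1.8 to about 56% at f/16, the same direction as the contrast loss described above.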

Here’s an actual series shot on a low-res camera: imagine what happens going from 18 megapixels to 50 megapixels! At some point, stopping down is a net loss (depth of field vs diffraction), and the game is over.

(Coastal Optics 60mm f/4 UV-VIS-IR APO Macro, Canon 1Ds Mark II)

With a 22MP camera, diffraction is only beginning to be visible at f/8 (speaking, for both cameras, in terms of contrast for very fine details on a per-pixel basis). But in reality, if one takes that 50MP image and downsamples it to 22MP, there is no difference whatsoever in diffraction effects. The issue is that users of a 50MP camera are (unrealistically) hoping to get 2.3X as many pixels with the same per-pixel sharpness. This cannot happen, at least not with the lens stopped down past f/5.6 or so (on a 50MP full-frame sensor). And the lens must perform at a very high level in the first place, e.g., Zeiss Otus or Zeiss Batis.
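The downsampling argument can be checked geometrically: measure the Airy disk in pixels of the capture, then rescale by the linear downsampling factor. In this sketch (550 nm light and the idealized full-frame pitch are assumptions; `airy_px_after_resample` is a hypothetical name) the capture resolution cancels out, so a 50MP capture resampled to 22MP carries the same diffraction blur per output pixel as a native 22MP capture. It deliberately ignores the blur contributed by the photosites themselves and by the resampling filter.

```python
import math

WAVELENGTH_UM = 0.55  # assumption: green light, ~550 nm
FULL_FRAME_AREA_UM2 = 36_000 * 24_000  # idealized 36 x 24 mm sensor


def pitch_um(megapixels: float) -> float:
    """Approximate photosite pitch for an idealized full-frame sensor."""
    return math.sqrt(FULL_FRAME_AREA_UM2 / (megapixels * 1e6))


def airy_px_after_resample(f_number: float, capture_mp: float, output_mp: float) -> float:
    """Airy disk width in output pixels after resampling a capture to output_mp."""
    airy_um = 2.44 * WAVELENGTH_UM * f_number
    airy_px_capture = airy_um / pitch_um(capture_mp)  # blur width in capture pixels
    scale = math.sqrt(output_mp / capture_mp)  # linear resampling factor (< 1 when downsampling)
    return airy_px_capture * scale  # blur width in output pixels


if __name__ == "__main__":
    for capture in (22, 50):
        print(f"{capture} MP capture -> 22 MP output at f/8: "
              f"{airy_px_after_resample(8, capture, 22):.2f} px")
```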

Another issue that tends to confuse is the relationship among depth of field, aperture, and focal length for different format sizes, and how these relate to the physical size of the photosite (pixel) on the sensor. See Format-Equivalent Depth of Field and F-Stop in Making Sharp Images.
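For the format question, one standard rule of thumb (same framing, same output size, same viewing distance) is that the equivalent full-frame f-number is the f-number times the crop factor, and at equivalent f-numbers the Airy disk is the same fraction of the frame diagonal. This sketch uses a 23.5 × 15.6 mm APS-C sensor purely as an assumed example; the helper names are hypothetical, and the depth-of-field equivalence is an approximation that loosens at macro distances.

```python
import math

FF_DIAGONAL_MM = math.hypot(36.0, 24.0)  # full-frame diagonal, about 43.3 mm
WAVELENGTH_MM = 0.00055  # assumption: green light, 550 nm


def crop_factor(width_mm: float, height_mm: float) -> float:
    """Ratio of the full-frame diagonal to this sensor's diagonal."""
    return FF_DIAGONAL_MM / math.hypot(width_mm, height_mm)


def equivalent_ff_f_number(f_number: float, width_mm: float, height_mm: float) -> float:
    """Full-frame f-number giving similar depth of field at the same framing and output size."""
    return f_number * crop_factor(width_mm, height_mm)


def airy_fraction_of_diagonal(f_number: float, width_mm: float, height_mm: float) -> float:
    """Airy disk diameter as a fraction of the sensor diagonal."""
    return 2.44 * WAVELENGTH_MM * f_number / math.hypot(width_mm, height_mm)


if __name__ == "__main__":
    aps_c = (23.5, 15.6)  # example APS-C dimensions (assumption)
    n_ff = equivalent_ff_f_number(5.6, *aps_c)
    print(f"crop factor ~ {crop_factor(*aps_c):.2f}; f/5.6 on APS-C ~ f/{n_ff:.1f} on full frame")
    print(airy_fraction_of_diagonal(5.6, *aps_c), airy_fraction_of_diagonal(n_ff, 36.0, 24.0))
```

With these dimensions, f/5.6 on APS-C behaves roughly like f/8.6 on full frame, and the two printed blur fractions match.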

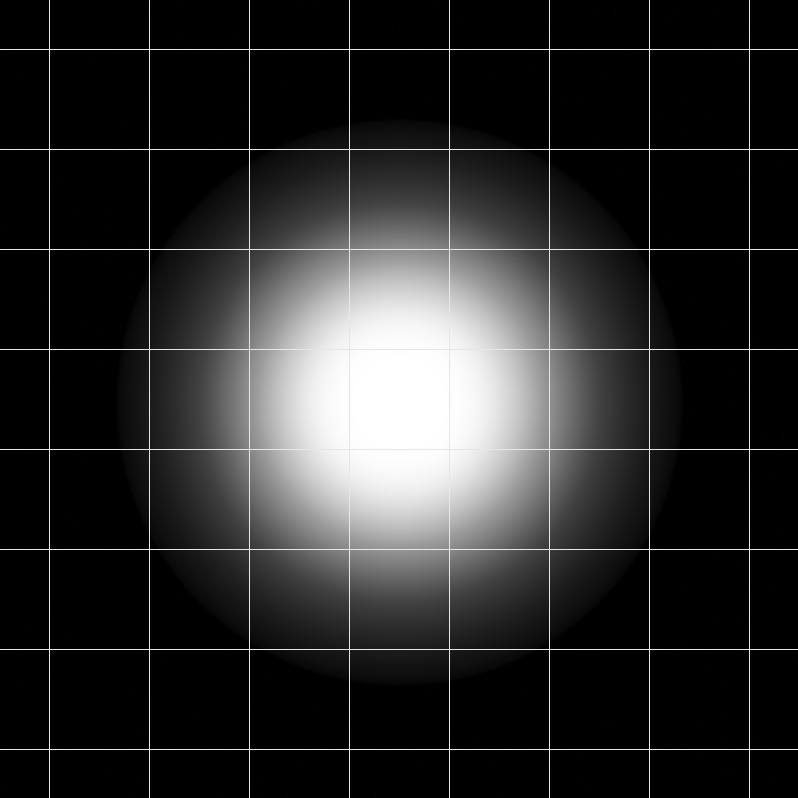

(diffraction is much more complex than this illustration)

The core issue is the size of the blur circle (Airy Disc) vs the size of the photosite. That blur circle enlarges with stopping down. At some aperture, the blur circle grows larger than the photosites on the sensor, and from then on the sensor can resolve finer detail than the lens delivers (because diffraction spreads the light out at that aperture).
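Solving 2.44·λ·N = k·p for N gives the f-number at which the Airy disk spans k photosites of pitch p. This sketch uses 550 nm light, the idealized full-frame pitch, and k = 2 as a default; k is an assumed rule of thumb (a Bayer sensor needs neighboring photosites to reconstruct color), not a measured threshold, and `critical_f_number` is a hypothetical name.

```python
import math

WAVELENGTH_UM = 0.55  # assumption: green light, ~550 nm
FULL_FRAME_AREA_UM2 = 36_000 * 24_000  # idealized 36 x 24 mm sensor


def pitch_um(megapixels: float) -> float:
    """Approximate photosite pitch for an idealized full-frame sensor."""
    return math.sqrt(FULL_FRAME_AREA_UM2 / (megapixels * 1e6))


def critical_f_number(megapixels: float, pixels_spanned: float = 2.0,
                      wavelength_um: float = WAVELENGTH_UM) -> float:
    """f-number at which the Airy disk diameter equals `pixels_spanned` photosite widths."""
    return pixels_spanned * pitch_um(megapixels) / (2.44 * wavelength_um)


if __name__ == "__main__":
    for mp in (22, 36, 50):
        print(f"{mp} MP: Airy disk spans 2 photosites at about f/{critical_f_number(mp):.1f}")
```

With k = 2 this lands near f/6.2 for 50MP and f/9.3 for 22MP, in the same neighborhood as the f/5.6 and f/8 observations above; changing k shifts both proportionally.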

For example, on both the 36MP Nikon D810 and the 50MP Canon 5DS R, f/16 is heavily degraded, with the finest details destroyed. f/22 is awful**. Acceptable images can be made at f/16 on those cameras*, but fine detail is lost no matter how much sharpening is applied.

As shown in the illustration above, if the lens is stopped down too far, at some point the Airy Disc begins to exceed the size of the photosite. It is then no longer possible to capture fine detail at the full resolving power of the sensor. At ultra-degraded f/22, the 50MP Canon 5DS R will record only minimally more detail than the 22MP Canon 5D Mark III. But this has always been the case, even with film.
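A crude way to see why extra megapixels buy so little at f/22 is to combine the Airy disk diameter and the photosite width in quadrature, a rough approximation that treats both blurs as if they were Gaussian. It is not a real MTF model; the 550 nm wavelength and idealized pitches are assumptions, and `combined_blur_um` and `advantage` are hypothetical names.

```python
import math

WAVELENGTH_UM = 0.55  # assumption: green light, ~550 nm
FULL_FRAME_AREA_UM2 = 36_000 * 24_000  # idealized 36 x 24 mm sensor


def pitch_um(megapixels: float) -> float:
    """Approximate photosite pitch for an idealized full-frame sensor."""
    return math.sqrt(FULL_FRAME_AREA_UM2 / (megapixels * 1e6))


def combined_blur_um(f_number: float, megapixels: float) -> float:
    """Root-sum-square of Airy disk diameter and photosite pitch (rough approximation)."""
    airy = 2.44 * WAVELENGTH_UM * f_number
    return math.hypot(airy, pitch_um(megapixels))


def advantage(f_number: float, mp_high: float = 50, mp_low: float = 22) -> float:
    """Fractional reduction in combined blur from the higher-resolution sensor."""
    return 1.0 - combined_blur_um(f_number, mp_high) / combined_blur_um(f_number, mp_low)


if __name__ == "__main__":
    for n in (5.6, 8, 11, 16, 22):
        print(f"f/{n}: 50 MP combined blur is {advantage(n):.1%} smaller than 22 MP")
```

Under this approximation the 50MP advantage shrinks from about 12% at f/5.6 to about 1% at f/22.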

* Diffraction is complex (a series of waveforms), but a useful simple model is a blur circle whose size grows with stopping down.

** Which makes me laugh at those photo magazine captions for images that would have been optimal in all ways at f/5.6, but were instead turned to mush at f/22 and then published at a tiny size. f/22 is a great way to make a mediocre print, even at a modest size.
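For reference, the "series of waveforms" in the * footnote is the Airy pattern: a bright central disk surrounded by progressively fainter rings. For an ideal lens with a circular aperture focused at infinity (at close focus, the effective f-number applies instead), the intensity at radius r in the image plane, and the size of the central disk out to the first dark ring, are:

$$
I(r) = I_0 \left[\frac{2\,J_1(x)}{x}\right]^2, \qquad x = \frac{\pi r}{\lambda N}
$$

$$
J_1(x) = 0 \text{ first at } x \approx 3.8317 \;\Rightarrow\; r_1 = \frac{3.8317}{\pi}\,\lambda N \approx 1.22\,\lambda N, \qquad d = 2 r_1 \approx 2.44\,\lambda N
$$

About 84% of the light falls inside that central disk, which is why its diameter d works as the "blur circle" in the simple model; the remaining light in the rings is part of why contrast drops as well as fine detail.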

See the following in Making Sharp Images:

- Diffraction pages

- Effects of Diffraction Blur on Color Aliasing

- Mitigating Micro Contrast Losses from Diffraction Blur