Adobe Camera Raw Enhance Details Feature: Integrated into my Workflow... How Much Does it Use the GPU?

Update: my testing shows that a slow integrated chipset GPU versus a fast GPU makes a huge difference: the 2018 Mac mini with its built-in Intel UHD Graphics 630 GPU is about 8X slower than the 2017 iMac 5K. This argues strongly against the 2018 Mac mini for photographers and in favor of getting a fast GPU, but it does not really clarify how much a "good/better/best" GPU choice on high-end machines impacts performance.

...

The Adobe Camera Raw Enhance Details feature is now a core part of my image processing pipeline: always a win so far, and I have yet to see a loss.

What I am still wondering, without a good answer yet, is just how much a (claimed) faster GPU can help. Shown below, CPU usage is modest and GPU usage is substantial (roughly 70%) but far from maximal. That implies a bottleneck elsewhere, such that a faster GPU would not be able to contribute as much as one might hope.

My understanding is that the Adobe Camera Raw Enhance Details feature is GPU intensive (not all GPUs are supported), which might mean that upgrading the GPU is worthwhile. Or it might not. Until I can get a faster GPU and a slower one in the same type of machine, I cannot quantify the advantage or lack thereof. I suspect a small benefit (no more than 30%), but it quite possibly could be less than 10%.

Note that “GPU intensive” might not include the surrounding context (CPU and disk I/O), so gains in GPU speed do not necessarily translate into similar gains in time required to complete a task. Put another way, a GPU that is 30% faster might correspond to gains of 30%, or it might correspond to gains of 10%, in terms of the actual time saved.
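This reasoning can be sketched with Amdahl's law. The block below is a minimal illustration, not a measurement: the function name `overall_speedup` and the GPU-bound fractions passed to it are hypothetical, since I have not measured what share of the per-file time is actually spent waiting on the GPU.

```python
def overall_speedup(gpu_fraction, gpu_speedup):
    """Amdahl's law: overall speedup when only part of the task gets faster.

    gpu_fraction: share of per-file time that is GPU-bound (0.0 to 1.0).
                  This is an assumed value, not a measured one.
    gpu_speedup:  how much faster the new GPU is (1.3 means 30% faster).
    """
    if not 0.0 <= gpu_fraction <= 1.0:
        raise ValueError("gpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    # Time for the non-GPU part is unchanged; the GPU part shrinks.
    new_time = (1.0 - gpu_fraction) + gpu_fraction / gpu_speedup
    return 1.0 / new_time


if __name__ == "__main__":
    # Hypothetical GPU-bound fractions for a GPU that is 30% faster.
    for fraction in (1.0, 0.7, 0.4):
        gain = (overall_speedup(fraction, 1.3) - 1.0) * 100
        print(f"GPU-bound fraction {fraction:.0%}: about {gain:.0f}% faster overall")
```

With these assumed inputs, a task that is entirely GPU-bound gets the full 30% gain, while one that is only 40% GPU-bound gains about 10%.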

See my discussion of recommended 2019 iMac 5K features in Apple 2019 iMac 5K: Two Hits with One Big Miss and also Reader Question: Radeon Pro Vega 48 GPU Upgrade for 2019 iMac 5K.


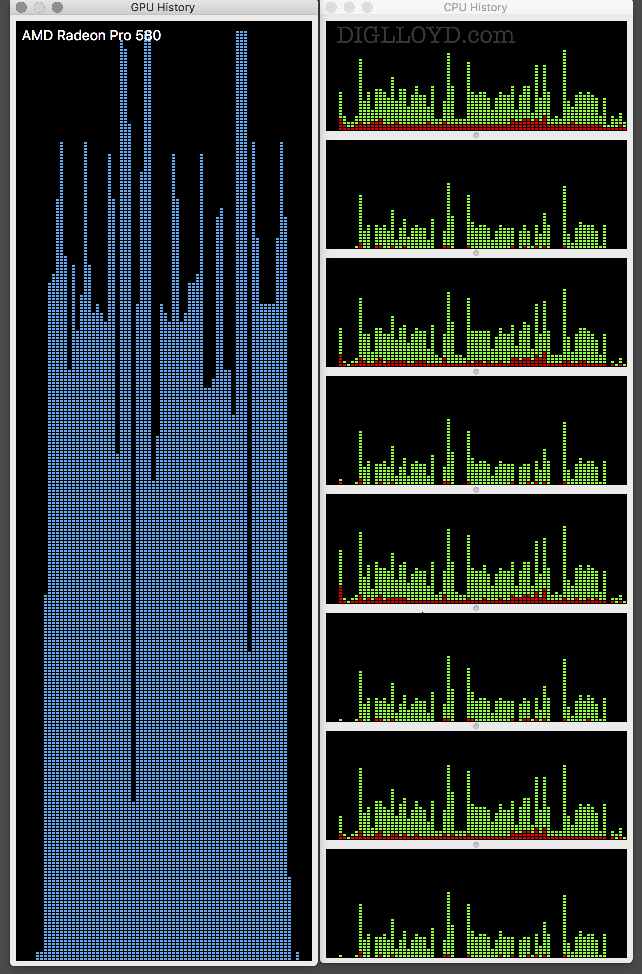

Shown below are GPU and CPU history usage on the 2017 iMac 5K 4.2 GHz with Radeon Pro 580 8192 MB while converting 97 Panasonic S1R raw RW2 files to enhanced versions.

CPU utilization averages about 1.5 CPU cores and GPU utilization averages roughly 70%. Why are the CPU cores not better utilized (is it too complicated to code for both CPU cores and GPU?), and why is the GPU not utilized at 100%? Good I/O algorithms can eliminate I/O as a factor, and ideally both the GPU and all CPU cores could be put to use as computational resources.

2017 iMac 5K 4.2 GHz with Radeon Pro 580 8192 MB

Below are the GPU and CPU utilization on the 2018 Mac mini. GPU utilization is 100% except for brief dips, which are presumably pauses between files to read and write data. The runtime per raw file on the 2018 Mac mini with Intel UHD Graphics 630 GPU is approximately 8 times longer than on the 2017 iMac 5K.

2018 Mac mini 3 GHz Intel Core i5 with Intel UHD Graphics 630

Robin D writes:

Lloyd, your comments about GPU load are topical for me. The enclosed is a copy of my GPU load monitor, which shows an approximate 96-100% load on my NVIDIA GeForce GTX 1070 (a highly rated GPU) for around 5 seconds on a 43MPix ARW file. Processing 1000 such files is a significant bottleneck, especially as LR seems to bog down on batch processing. CPU load was low at around 14%. Friends told me I bought a GPU that was too powerful. Obviously not.

DIGLLOYD: the load makes sense to me given what Adobe has said about GPU usage for this feature, and the observations above.

But it does not necessarily follow that Robin’s choice of GPU is a better choice than another. To establish that, one has to compare the actual time needed to get the task done. For example, on the 2019 iMac 5K, how does the +$450 AMD Radeon Pro Vega 48 compare with the AMD Radeon Pro 580X? Is there a 5%, 10%, 30%, or some other difference? I hope to have an opportunity to test and see.
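The arithmetic behind such a comparison is simple enough to sketch. In the block below, the 5 seconds per file and the 1000-file batch come from Robin's note; the 4.0-second figure for a second GPU is purely a hypothetical placeholder, and the function names are my own.

```python
def batch_minutes(seconds_per_file, file_count):
    """Total batch time in minutes, assuming a constant time per file."""
    return seconds_per_file * file_count / 60.0


def percent_time_saved(baseline_seconds, candidate_seconds):
    """Percentage of per-file time saved by the candidate versus the baseline."""
    if baseline_seconds <= 0:
        raise ValueError("baseline_seconds must be positive")
    return (baseline_seconds - candidate_seconds) / baseline_seconds * 100.0


if __name__ == "__main__":
    # From Robin's note: about 5 seconds per file, 1000 files.
    print(f"Batch time: {batch_minutes(5.0, 1000):.0f} minutes")
    # Hypothetical second GPU at 4.0 seconds per file (placeholder, not measured).
    print(f"Time saved: {percent_time_saved(5.0, 4.0):.0f}%")
```

At 5 seconds per file, 1000 files take roughly 83 minutes, so even a modest percentage difference between GPUs adds up over a large batch. It only counts, though, if it shows up in measured per-file times rather than in GPU load figures.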