Sensor Bifurcation, Stitched Sensors: More Insight From Readers

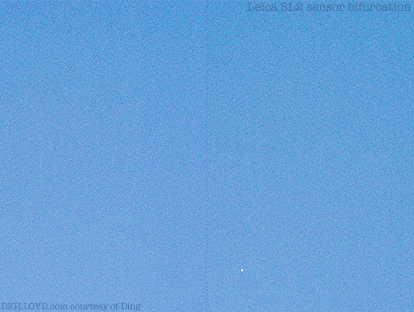

See my detailed post on the Leica M10 Monochrom sensor bifurcation defect, which damaged all of my Eureka Dunes images.

Dan Llewelyn of MaxMax.com writes:

After talking to a few sources who really know what they are talking about, I can say that all FF sensors are stitched, at least with current technology. One of these sources sells a lot of ITAR stuff, so while there may be some super-secret military foundry out there, APS is the current limit for an unstitched sensor.

The stitch line is always visible if you don’t process it out; it could be very subtle, but it’s there. With very large pixels it might not be visible, but that probably means sensors with pixels larger than 10-20 µm.

Modern steppers have a field of roughly 30 x 30 mm these days. To step a 35mm device in 2 shots, you need ~28-30 mm in one direction (24 mm active area + dark pixels + peripheral column circuits + bond pads ≈ 28 mm minimum).
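To make the stepper arithmetic concrete, here is a minimal Python sketch that counts how many reticle exposures a die needs. It assumes the ~30 x 30 mm field quoted above and a flat 4 mm of overhead per axis (the 28 mm minimum minus the 24 mm active height). The helper `reticle_shots` and the 23.5 x 15.6 mm APS-C size are illustrative assumptions, not any foundry's actual layout.

```python
import math


def reticle_shots(active_w_mm: float, active_h_mm: float,
                  overhead_mm: float = 4.0,
                  field_w_mm: float = 30.0, field_h_mm: float = 30.0) -> int:
    """Hypothetical estimate of the reticle exposures needed to pattern one die.

    overhead_mm is an assumed per-axis allowance for dark pixels,
    peripheral column circuits and bond pads.
    """
    die_w = active_w_mm + overhead_mm
    die_h = active_h_mm + overhead_mm
    # Try both orientations of the die relative to the field; keep the better one.
    as_is = math.ceil(die_w / field_w_mm) * math.ceil(die_h / field_h_mm)
    rotated = math.ceil(die_w / field_h_mm) * math.ceil(die_h / field_w_mm)
    return min(as_is, rotated)


if __name__ == "__main__":
    print("35mm (36 x 24 mm):", reticle_shots(36.0, 24.0))
    print("APS-C (23.5 x 15.6 mm):", reticle_shots(23.5, 15.6))
```

Under these assumptions a 36 x 24 mm sensor (about 40 x 28 mm with overhead) needs two exposures, and therefore has one stitch line, while an APS-C die fits in a single field.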

So, I think my initial guess is correct. The line you are seeing on the Leica M10M is the stitching line, and it is more apparent because there is no CFA, no AA filter and no debayering to smooth things out. But Leica needs to do a better job of processing out that stitch. Part of the problem might be that they are using an older, less expensive foundry that doesn't stitch as well (pretty likely IMO).

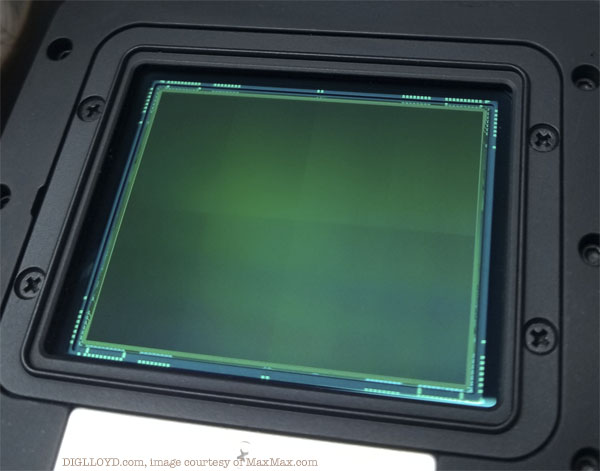

BTW, on sensors like those in the Nikon D800, Nikon D810 and Nikon D850, there is *no* visible stitch line; at least I can't see it when I look at the sensor.

My guess is that the camera manufacturers really don't want that fact out of the bag. Otherwise, next thing you know, you will have pixel peepers taking pictures of white skies, processing the hell out of the images to find the line, and then sending their cameras in for repair because of a 'defective' sensor, when in real life they would never have noticed it.

DIGLLOYD: I never saw any sensor bifurcation on the Nikon D850 Monochrome, which is a color camera with its color filter array removed by Dan at MaxMax.com. If Dan says there is no visible stitch line, I believe him—he has spent a ton of time with some advanced equipment doing these conversions.

Put another way, it might fairly be said that Leica is shipping inferior sensors compared to Nikon. Presumably Leica could source higher-quality sensors (at greater cost). I’d be very curious to test the Phase One Achromatic to see if any sensor bifurcation is seen.

Roy P writes:

I finally have a definitive answer. These larger sensors are optically stitched together, using multiple reticle exposures on a single wafer. It looks like the fab that makes the Leica sensors for the SL2 and M cameras is using two exposures. Sony may be processing its 35mm sensors in one shot, but it is almost certainly doing optical stitching for its medium-format sensors.

My friend says overlay is a non-issue with a 3.x micron pitch, and that is very true. However, I couldn’t see how that would work for the peripheral logic (DRAMs, sense amplifiers, line drivers, registers where the data is latched, etc.). The obvious answer is that they must be making this part of the chip with large geometries too, i.e., not 14 nm or 10 nm, but perhaps more like 130 nm or even 250 nm.

DIGLLOYD: there is surely some quality variation at different fabs. Leica is probably trying to keep costs low, and also might be accepting sensors of lower grade; how else to explain an obvious flaw that has damaged all my images? For that matter, I wonder if the white-dot pimples are some other kind of sensor flaw, and also unfixable. Leica went radio-silent when I reported the bug with the Leica M Monochrom Typ 246; there’s some dirty little secret that is not discussable, it seems.

Roy P continues:

...what is interesting is that, in theory, they should be able to use this exact same process to make 8” x 8” sensors on a 300 mm wafer. That is roughly the largest square you can fit into a circle with a 300 mm diameter, which also means the lenses for a camera with such a big sensor would need to generate an image circle of nearly that 300 mm diameter. So even if wide-angle lenses are impractical, normal (say, 50 mm equivalent) lenses should be feasible.
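The wafer geometry is easy to check. This short Python sketch (variable names are illustrative) computes the largest square that fits in a 300 mm circle, compares it with an 8” square, and finds the image circle such a square would need, alongside the 36 x 24 mm full-frame diagonal for scale.

```python
import math

MM_PER_INCH = 25.4
WAFER_DIAMETER_MM = 300.0

# Largest square inscribed in a circle: its diagonal equals the circle's diameter.
max_side_mm = WAFER_DIAMETER_MM / math.sqrt(2)
max_side_in = max_side_mm / MM_PER_INCH

# An 8" x 8" sensor and the smallest image circle that covers it (its diagonal).
side_mm = 8 * MM_PER_INCH
image_circle_mm = side_mm * math.sqrt(2)

# Full-frame (36 x 24 mm) diagonal, for comparison.
ff_diagonal_mm = math.hypot(36.0, 24.0)

print(f"largest square on wafer: {max_side_mm:.1f} mm ({max_side_in:.2f} in)")
print(f"8 in sensor side: {side_mm:.1f} mm, image circle: {image_circle_mm:.1f} mm")
print(f"image circle vs full-frame diagonal: {image_circle_mm / ff_diagonal_mm:.1f}x")
```

The 8” figure is thus a round number a little under the geometric maximum of about 8.35”, and its image circle works out to roughly 287 mm, about 6.6x the full-frame diagonal.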

The Voigtlander 50 APO for Sony has a front element that looks to be about 1” in diameter. So for a camera with an 8” x 8” sensor, it should be possible to build a 50 mm equivalent lens with a front element of perhaps around 8” in diameter. Assuming the lens-making cost scales with the square of the front-element diameter, such a lens should cost about 64x what the CV50 APO costs, or about $68,000. Which is not really too bad!
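The 64x figure follows from assuming cost grows with the square of the front-element diameter (roughly, with glass area). A small Python sketch of that assumption is below. `cost_multiplier` is a hypothetical helper, and the base price is simply what the text's own numbers imply ($68,000 / 64), not a quoted retail price.

```python
def cost_multiplier(base_diameter_in: float, new_diameter_in: float,
                    exponent: float = 2.0) -> float:
    """Cost multiplier if lens cost scales as diameter ** exponent (assumed model)."""
    return (new_diameter_in / base_diameter_in) ** exponent


area_scaling = cost_multiplier(1.0, 8.0)                 # square law, as in the text
volume_scaling = cost_multiplier(1.0, 8.0, exponent=3)   # if cost tracked glass volume instead
implied_cv50_price = 68_000 / area_scaling               # base price implied by the text's numbers

print(area_scaling, volume_scaling, implied_cv50_price)
```

The exponent carries the whole estimate: with a cube law the same 8x scale-up gives 512x rather than 64x, so the result is quite sensitive to that assumption.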

Unfortunately, the sensor would probably cost $300,000, assuming the sensor in the Phase One IQ4 150MP back costs about $15K and that cost scales with area (200 mm x 200 mm vs. 54 mm x 40 mm). So it should be possible to build a ~$400,000 camera with an 8” x 8” sensor, with a resolution of about 1.2 gigapixels, and with a Voigtlander 50 APO Lanthar quality lens!
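The sensor estimate scales the IQ4 150MP sensor cost by area. A minimal Python sketch of that arithmetic, using the text's assumed $15K base cost, its 200 x 200 mm and 54 x 40 mm dimensions, and the $68,000 lens estimate:

```python
BASE_SENSOR_COST_USD = 15_000   # assumed IQ4 150MP sensor cost, from the text
BASE_AREA_MM2 = 54 * 40         # IQ4 150MP sensor dimensions, as given in the text
BIG_AREA_MM2 = 200 * 200        # ~8" x 8" sensor, as given in the text
LENS_COST_USD = 68_000          # lens estimate from the text

# Linear-in-area cost model (an assumption, not real sensor pricing).
area_ratio = BIG_AREA_MM2 / BASE_AREA_MM2
sensor_cost = BASE_SENSOR_COST_USD * area_ratio

print(f"area ratio: {area_ratio:.1f}x")
print(f"sensor: ${sensor_cost:,.0f}, sensor + lens: ${sensor_cost + LENS_COST_USD:,.0f}")
```

Linear area scaling gives about $278K, which the text rounds to $300,000; sensor plus lens comes to roughly $346K before the rest of the camera. Yield tends to fall as die area grows, so linear scaling is, if anything, optimistic.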

DIGLLOYD: a 50mm lens on a sensor that size would be an 8mm field-of-view equivalent. I don't think such a lens is feasible, and the ray angle issues would be godawful. I’d want something more like 135mm, which would be about a 25mm field-of-view equivalent. It would need to be f/8 or so to be practical.

image courtesy of MaxMax.com