Reader Comment: Pixel Shift vs Frame Averaging Complexity + How To Fix Motion Issues with Pixel Shift

re: pixel shift and multi-shot high-res mode and frame averaging

re: Nikon Z8

Reader Glenn K writes in response to Nikon Z8 Pixel Shift Testing Soon:

Pixel shift is complex to implement and very limited in applicability. I have never found it usable in landscape work... things are always moving.

Frame averaging, on the other hand, should be trivial to implement and has wide applicability.

I am increasingly convinced that no one at camera companies actually takes pictures.

DIGLLOYD: agreed that "no one" at camera companies actually takes pictures*, and it shows in the oft-botched design of every brand, both in ill-conceived user interface and in lack of features that are trivial to add but massively useful eg frame averaging.

* And the legions of coin-operated reviewers do not call out badly done features or key missing features, so where exactly do camera companies get competent user feedback that isn’t filtered through 5 levels of clueless (about usability) management?

Frame averaging

Consider that you could be a landscape or studio or product or similar photographer, and have an effective ISO 6 or similar noise level in all your captures with a trivial change to camera firmware. The engineers who never shoot pictures can’t figure that out? At some point you have no recourse but to be really pissed off at this design incompetence.
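As a rough sanity check on that "effective ISO 6" figure, here is a minimal sketch of the arithmetic. It assumes the scene is static and that noise is uncorrelated from frame to frame, so random noise falls by the square root of the frame count. The base ISO of 64 and the frame count of 10 are illustrative assumptions, and the function names are hypothetical.

```python
import math


def effective_iso(base_iso: float, n_frames: int) -> float:
    """Rough equivalent ISO after averaging n_frames identical exposures.

    Assumption: noise is uncorrelated between frames, so averaging N frames
    behaves roughly like collecting N times the light at ISO base_iso / N.
    """
    if n_frames < 1:
        raise ValueError("n_frames must be at least 1")
    return base_iso / n_frames


def noise_reduction_factor(n_frames: int) -> float:
    """Random noise falls by the square root of the number of frames averaged."""
    if n_frames < 1:
        raise ValueError("n_frames must be at least 1")
    return math.sqrt(n_frames)


# Illustrative numbers only: base ISO 64, 10 averaged frames.
print(effective_iso(64, 10))        # 6.4
print(noise_reduction_factor(16))   # 4.0
```

Real sensors have fixed-pattern noise that averaging does not remove, so treat the result as an upper bound on the benefit.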

As for frame averaging, the mindlessness of low-hanging fruit left to rot is hard to fathom: frame averaging can be trivially implemented as a variant of interval shooting and/or multiple exposure, with an option of saving one single-shot frame and one average-of-N frame. Instead we get an arbitrary capture interval delay of one second, and no averaging. Even if you had to average the frames yourself, having that option would allow a rapid capture.
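To illustrate how little is involved, here is a minimal sketch of a running average that folds in one frame at a time, so only a single accumulation buffer is needed regardless of N. This is not any camera's actual firmware; `Frame` and `RunningAverage` are hypothetical names, and a frame is modeled simply as rows of photosite values.

```python
from typing import List

Frame = List[List[float]]  # rows of photosite values; a stand-in for raw data


class RunningAverage:
    """Accumulates frames one at a time; holds only one sum buffer in memory."""

    def __init__(self) -> None:
        self.count = 0
        self.total: Frame = []

    def add(self, frame: Frame) -> None:
        """Add one captured frame to the running sum."""
        if self.count == 0:
            # First frame: copy it so the caller's data is not modified.
            self.total = [list(row) for row in frame]
        else:
            same_shape = len(frame) == len(self.total) and all(
                len(row) == len(total_row)
                for row, total_row in zip(frame, self.total)
            )
            if not same_shape:
                raise ValueError("all frames must have the same dimensions")
            for total_row, row in zip(self.total, frame):
                for x, value in enumerate(row):
                    total_row[x] += value
        self.count += 1

    def result(self) -> Frame:
        """Return the per-photosite mean of all frames added so far."""
        if self.count == 0:
            raise ValueError("no frames added")
        return [[value / self.count for value in row] for row in self.total]


# Usage: two tiny 1x2 "frames" of the same static scene.
averager = RunningAverage()
averager.add([[10, 20]])
averager.add([[14, 22]])
print(averager.result())  # [[12.0, 21.0]]
```

Saving the first frame alongside the final average would give the "one single-shot frame and one average-of-N frame" output described above.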

That Nikon has lost its wits is evident in the Nikon D850 RAW-file averaging capability being removed from the mirrorless cameras... WTF? It was tedious, but at least you could do it right there in the camera.

Pixel shift Pixel shit

Pixel shift: I am unsure what Glenn means by “complexity”. The core behavior is trivially simple: shift the sensor and save the frames. Maybe Glenn is referring to the Panasonic S1R style multi-shot high-res mode, which applies computation-intensive sophisticated processing to deal with motion etc, and does it in camera for a final output file ready to process. The motion problem is complex to solve, though not the shifting/capture itself.

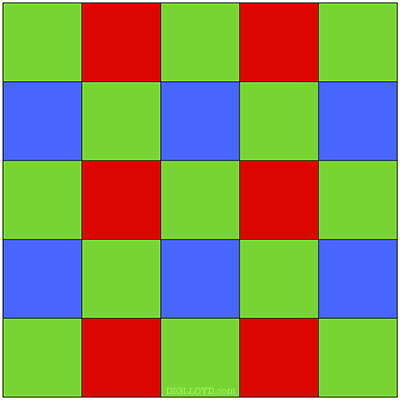

As I wrote a few years ago: one trivially-easy way to sidestep the problems with pixel shift motion issues is to take N pixel shift frames, but in a cyclical manner, then average all of them. In that way, out-of-register subject motion would be greatly reduced.

Motion-tolerant pixel shift?

You can see this for yourself: manually take N pixel shift shots of something moving, say 4 frames. Then use frame averaging on them. Try it!

To spell this out: use a variant of frame averaging to implement motion-tolerant pixel shift.

Call the frames of a 4-shot pixel shift 1/2/3/4. For a motion-tolerant pixel shift capture, repeat that sequence some configurable number of times, say 6:

1/2/3/4 <== pixel shift attempt #1

1/2/3/4

1/2/3/4

1/2/3/4

...

1/2/3/4

1/2/3/4 <== pixel shift attempt #N

A1/A2/A3/A4 <== AVERAGE of each of the captures for each photosite

Note that capturing these differential values could lead to sophisticated corrections based on varying pixel values over time! Averaging is a crude approach but trivial to implement.

Any lighting change or subject movement will be minimized by averaging the value of each photosite. Process the pixel shift images, then use frame averaging on them. Existence proof. No, it will not fix major movement, but it might deal with the little stuff, which is good enough to hugely expand applicability.
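The A1/A2/A3/A4 scheme above can be sketched as follows: group the frames by shift position across all repeats, then average each group per photosite. This is a minimal illustration under simplifying assumptions (perfectly repeatable sensor positioning, frames modeled as rows of numbers), not a description of what any camera or converter does. The names `average_frames` and `average_by_shift_position` are hypothetical.

```python
from typing import List, Sequence

Frame = List[List[float]]  # rows of photosite values; a stand-in for raw data


def average_frames(frames: Sequence[Frame]) -> Frame:
    """Per-photosite mean of frames that all share the same dimensions."""
    if not frames:
        raise ValueError("need at least one frame")
    count = len(frames)
    height = len(frames[0])
    width = len(frames[0][0])
    return [
        [sum(frame[y][x] for frame in frames) / count for x in range(width)]
        for y in range(height)
    ]


def average_by_shift_position(cycles: Sequence[Sequence[Frame]]) -> List[Frame]:
    """cycles[k][p] is the frame at shift position p in repeat k.

    Returns one averaged frame per shift position (A1, A2, A3, A4 for a
    4-shot pixel shift), ready to feed to the usual pixel shift merge.
    """
    if not cycles:
        raise ValueError("need at least one pixel shift cycle")
    positions = len(cycles[0])
    if any(len(cycle) != positions for cycle in cycles):
        raise ValueError("every cycle must have the same number of positions")
    return [
        average_frames([cycle[p] for cycle in cycles])
        for p in range(positions)
    ]


# Usage: 2 repeats of a 4-shot sequence, each frame a single photosite.
cycles = [
    [[[1.0]], [[2.0]], [[3.0]], [[4.0]]],  # pixel shift attempt #1
    [[[3.0]], [[4.0]], [[5.0]], [[6.0]]],  # pixel shift attempt #2
]
print(average_by_shift_position(cycles))
# [[[2.0]], [[3.0]], [[4.0]], [[5.0]]]
```

Averaging before the merge keeps the four shift positions separate; the alternative mentioned above, processing each pixel shift set first and averaging the results, would use `average_frames` alone on the merged images.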

Below, I tried this idea: it does minimize the ugly color artifacting, but with this scene having excessive movement everywhere, there is no hope of avoiding motion blur. But it is proof of concept for exploring this idea. And surely the additional frames could be used for more sophisticated processing.

Maybe I’m wrong and this won’t be a viable approach. For one thing, it also assumes that the IBIS mechanism can repeat its positioning to within 1/10 of a pixel or so. And I suspect that the Sony A7R V cannot do better than about 1/2 pixel, which would explain its relatively disappointing results in outer zones.

But some variant of it might work, and the multi-shot high-res mode of the Panasonic S1R is an existence proof; see for example Multi-Shot High-Res MODE1 vs MODE2 with Moving Subject Matter.

No motion correction used; pixel shift via PixelShift2DNG